CFD Project Outsourcing

Outsource your CFD project to the MR CFD simulation engineering team. Our experts are ready to carry out CFD projects in all related engineering fields. Our services cover both industrial and academic work and draw on the wide range of CFD simulations that ANSYS Fluent supports. By outsourcing your project, you can benefit from MR CFD's primary services, including CFD Consultant, CFD Training, and CFD Simulation.

The project freelancing procedure is as follows:

An official contract will be set based on your project description and details.

As we start your project, you will have access to our Portal to track its progress.

You will receive the project's resource files after you confirm the final report.

Finally, you will receive a comprehensive training video and technical support.

What is Machine Learning?

Machine Learning is an artificial intelligence (AI) application that allows systems to learn and improve automatically from experience without being explicitly programmed. Machine learning focuses on developing computer programs that can access and use data to learn for themselves. The learning process begins with observations or data, such as examples, direct experience, or instruction, to look for patterns in data and make better decisions in the future based on the examples we provide. The primary aim is to allow computers to learn automatically, without human intervention or assistance, and adjust their actions accordingly.

What is Design of Experiments (DOE)?

Design of Experiments (DOE), also referred to as Designed Experiments or Experimental Design, is a systematic procedure carried out under controlled conditions to discover an unknown effect, test or establish a hypothesis, or illustrate a known effect. It involves determining the relationship between the input factors affecting a process and the output of that process, and it helps to manage process inputs to optimize the result.

Sir Ronald A. Fisher first introduced the method in the 1920s and 1930s. Design of Experiment is a powerful data collection and analysis tool used in various experimental situations. It allows manipulating multiple input factors and determining their effect on the desired output (response). By simultaneously changing numerous inputs, DOE helps identify significant interactions that may be missed when experimenting with only one factor at a time. We can investigate all possible combinations (full factorial) or only a portion of the possible combinations (fractional factorial).
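The difference between a full and a fractional factorial design can be illustrated with a short Python sketch. It assumes three hypothetical factors at two coded levels (-1 and +1) and builds the half fraction by setting the last factor equal to the product of the others, which is one common textbook construction rather than the only possible one.

```python
from itertools import product


def full_factorial(levels_per_factor):
    """Return every combination of the given factor levels."""
    return list(product(*levels_per_factor))


def half_fraction_two_level(k):
    """Build a 2^(k-1) fractional factorial in coded units (-1, +1).

    Assumption: the last factor is set to the product of the first
    k-1 factors, so it is aliased with their interaction.
    """
    runs = []
    for base in product((-1, 1), repeat=k - 1):
        last = 1
        for value in base:
            last *= value
        runs.append(base + (last,))
    return runs


# Three hypothetical factors, two coded levels each.
full = full_factorial([(-1, 1)] * 3)
half = half_fraction_two_level(3)

print(len(full), "runs in the full factorial")
print(len(half), "runs in the half fraction")
for run in half:
    print(run)
```

The half fraction needs half as many runs as the full design, at the cost of no longer being able to separate the third factor's effect from the interaction of the first two.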

A well-planned and executed experiment may provide much information about the effect on a response variable due to one or more factors. Many experiments involve holding certain factors constant and altering the levels of another variable. However, this “one factor at a time” (OFAT) approach to process knowledge is inefficient compared to changing multiple factor levels simultaneously.

There are multiple approaches to DOE: OFAT, full and fractional factorial, Taguchi, and response surface methodology (RSM). Among these, RSM is often the preferred approach when the goal is to optimize a response.

In short, DOE systematically determines the relationship between the factors affecting a process and its output. In other words, it is used to identify cause-and-effect relationships. This information is required to manage process inputs and optimize the output.

DOE is an indispensable instrument for modeling, optimizing, and enhancing quality and performance. The primary purpose of a controlled experiment is to test hypotheses, identify the effects of various factors, and reveal their interaction.

The essential stages of DOE include:

– Problem Definition: Define the problem and its influencing factors.

– Design the Experiment: Determine the type of experiment, the number of factors to include, and the number of experiments to conduct.

– Conduct the Experiment: Conduct the experiments and acquire the resulting data.

– Interpret and Analyze the Results: Use statistical analysis to determine which factors and interactions have a significant effect on the response.

There are numerous varieties of DOE, such as:

– Factorial Designs: These are experiments that examine all possible factor combinations.

– Fractional Factorial Designs: These investigations examine a subset of the possible factor combinations.

– Response Surface Designs: These experiments construct a mathematical model of a process and determine its optimal settings.

What is Response Surface Methodology (RSM)?

Response Surface Methodology, or RSM for short, is a set of statistical and mathematical techniques for determining the relationship between one or more response variables and several independent (studied) variables. Box and Wilson introduced the method in 1951, and it is still used today as an experimental design tool for building empirical models. RSM aims to optimize the response (output variable) affected by several independent variables (input variables).

An experiment is a series of tests called runs. In each run, we change the input variables to determine the causes of changes in the response variable. In response surface designs, constructing the response surface model is an iterative process. As soon as we obtain an approximate model, we check its goodness of fit to see whether it is satisfactory. If it is not, the estimation process starts again, and we perform more experiments.

The method seeks the best response by finding the optimal level of each design variable. In designing experiments, the goal is to identify and analyze the variables affecting the outputs with the least number of experiments.

Response Surface Methodology (RSM) is a collection of statistical and mathematical techniques beneficial for modeling and analyzing problems. The objective is to optimize a response influenced by multiple variables.

In simplified terms, RSM is used when optimizing a process with multiple input variables. It aids in the development, improvement, and optimization of processes. RSM investigates the relationships between multiple explanatory variables and one or more response variables, and it relies on carefully planned experiments to determine the optimal response.

Here are a few important facts about RSM:

– It is a sequential operation. It involves executing the experiment, estimating the coefficients, predicting the response, evaluating the model’s adequacy, and identifying the optimal conditions.

– It is an efficient instrument for creating, enhancing, and optimizing processes.

– It is frequently employed in situations where multiple input variables have the potential to influence a performance metric or quality characteristic of a system.
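The sequence described above (run the experiments, estimate the coefficients, check the model's adequacy, locate the optimum) can be sketched for the simplest case of a single input and a second-order model y = b0 + b1*x + b2*x^2. Everything in the sketch is an assumption made for illustration: the data are synthetic and noise-free, the fit uses plain least squares via the normal equations, and R² stands in for a fuller adequacy check.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_quadratic(xs, ys):
    """Least-squares fit of y = b0 + b1*x + b2*x^2 (normal equations)."""
    rows = [[1.0, x, x * x] for x in xs]
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(3)]
    return solve_linear(xtx, xty)


def r_squared(xs, ys, coeffs):
    """Coefficient of determination of the fitted model."""
    b0, b1, b2 = coeffs
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (b0 + b1 * x + b2 * x * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


# Synthetic responses at five coded levels of one hypothetical input.
xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
ys = [5.0 - 2.0 * x - 1.0 * x * x for x in xs]

b0, b1, b2 = fit_quadratic(xs, ys)
x_opt = -b1 / (2.0 * b2)  # stationary point; a maximum because b2 < 0

print("coefficients:", b0, b1, b2)
print("R^2:", r_squared(xs, ys, (b0, b1, b2)))
print("stationary point:", x_opt)
```

With several inputs the same idea applies, but the model gains cross terms and the stationary point comes from solving a small linear system instead of a single division.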

How can Optimization (DOE) CFD simulation be applied in Engineering and Industries?

Design of Experiments (DOE) is frequently used to optimize CFD (Computational Fluid Dynamics) simulations, which can be applied to various engineering disciplines and industries. Here are some illustrations:

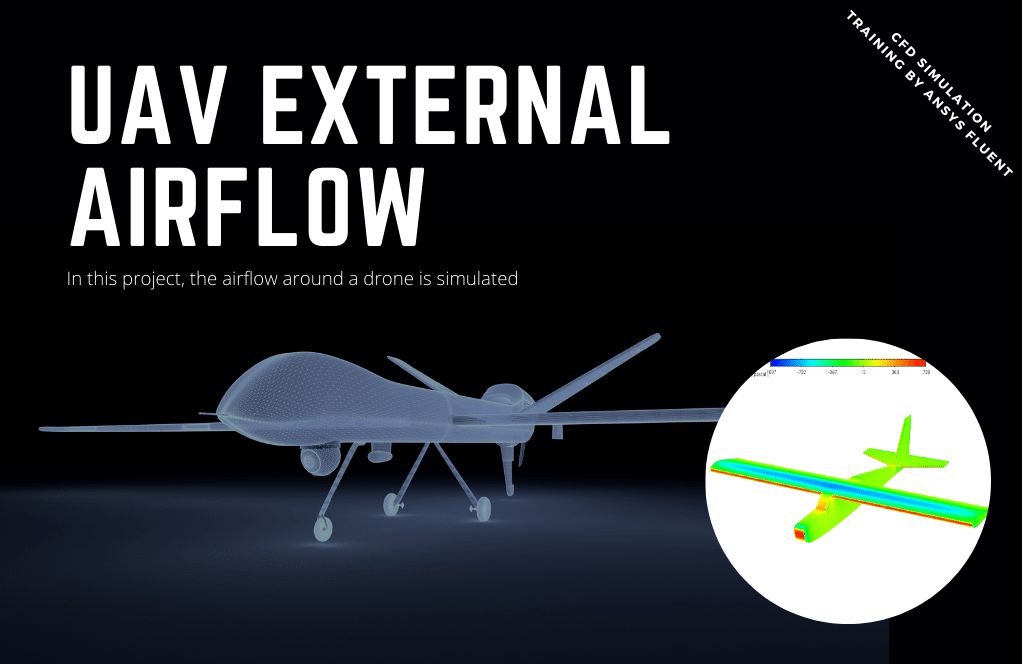

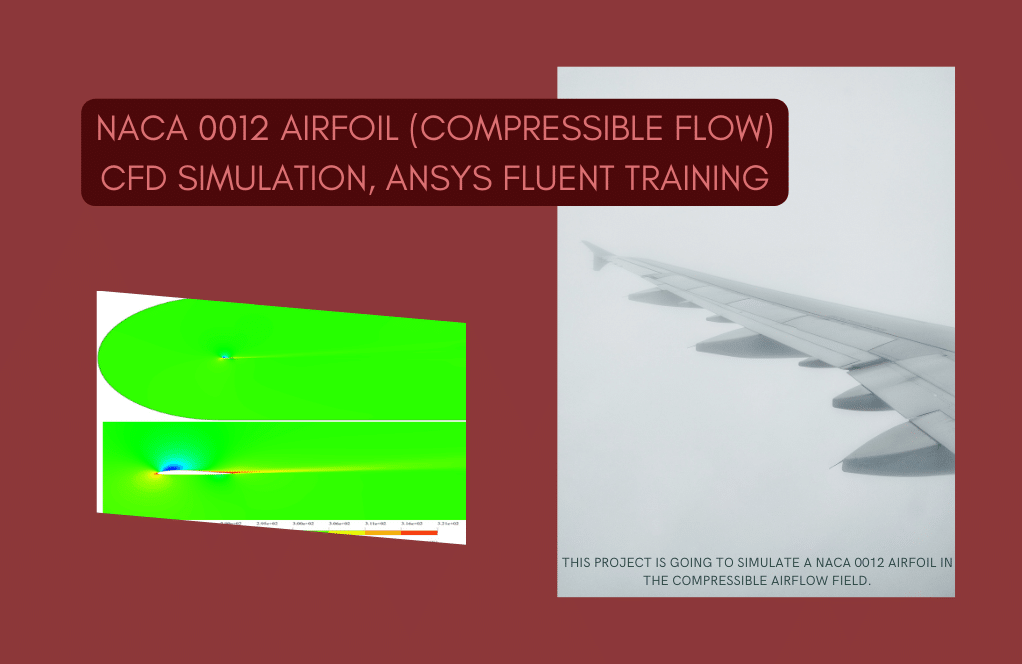

Aerospace Engineering

CFD optimization is used to enhance aircraft and spacecraft design in Aerospace Engineering. It aids in reducing drag, increasing lift, and improving overall aerodynamic efficiency.

Example: Maximizing fuel efficiency by optimizing the size and configuration of an aircraft's wing.

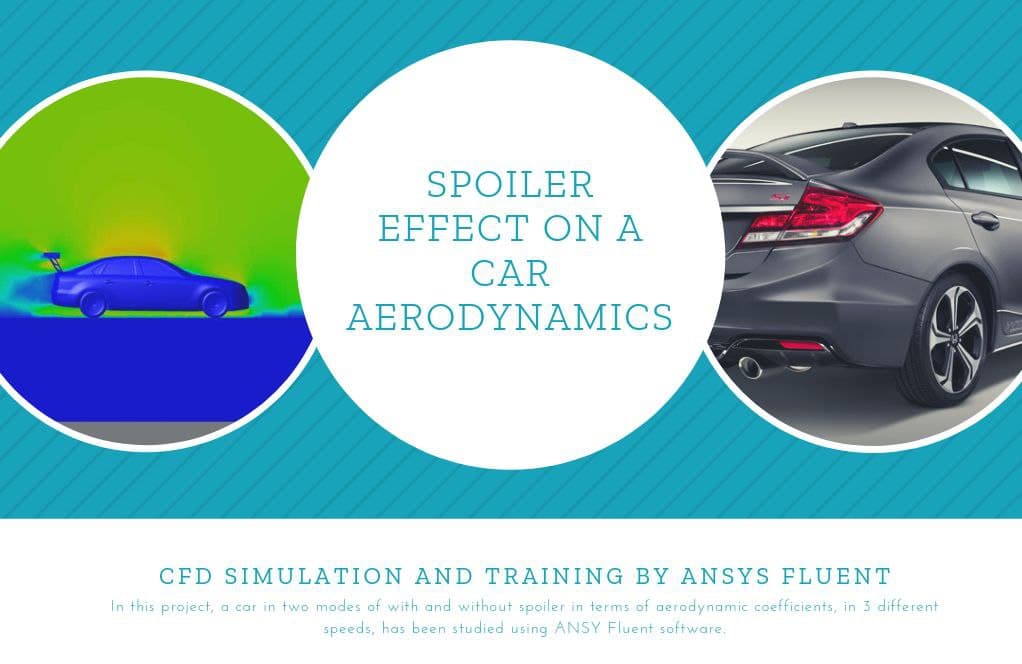

Automotive Engineering

CFD is utilized in Automotive Engineering to optimize the vehicle design. It helps improve fuel economy, reduce emissions, and increase safety.

Example: Optimizing the airflow around a vehicle to reduce drag and increase fuel economy.

Oil and Gas Industry

CFD is used to optimize the design and operation of equipment such as pipelines, separators, and heat exchangers in the oil and gas industry.

Example: Optimizing the design of a pipeline to reduce pressure loss and increase flow rate.

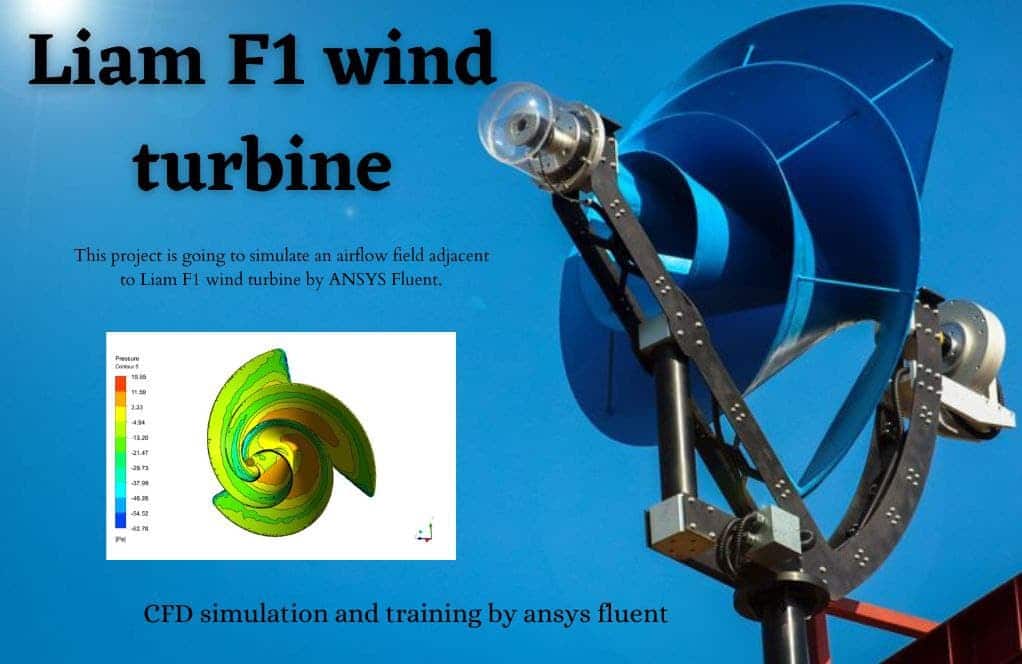

Energy Sector

CFD optimization is used in designing and operating power plants, wind turbines, and solar panels.

Example: Maximizing power output by optimizing the design of a wind turbine blade.

Chemical Industry

CFD is used to optimize the design and operation of chemical reactors, distillation columns, and other chemical industry processes.

Example: Optimizing a chemical reactor's design for maximum conversion and selectivity.

Biomedical Engineering

CFD is used to optimize medical device design and to understand fluid flow in the human body.

Example: Optimizing the design of a heart valve to enhance blood flow.

In each of these applications, DOE is indispensable. It enables engineers to systematically alter the design’s parameters and comprehend their effect on performance. This facilitates identifying the optimal design that fulfills the specified performance criteria.

MR CFD services in the Optimization (DOE) Simulation for Engineering and Industries

With several years of experience simulating various problems in various CFD fields using ANSYS Fluent software, the MR CFD team is ready to offer extensive modeling, meshing, and simulation services. Simulation Services for Optimization (DOE) CFD simulations are categorized as follows:

- Direct and Indirect optimization process for different fluidic systems

- ML models for different projects to predict the behavior of the related system

- Calculating different mathematical correlations for predicting the behavior of various types of systems

- Obtaining the optimal state of a process inside a system to reduce the overall costs

- Designing various equipment for maximum efficiency

MR CFD is a company that offers a variety of Computational Fluid Dynamics (CFD) and Finite Element Analysis (FEA) simulation services. MR CFD provides the following services within the context of Optimization (DOE) Simulation for Engineering and Industry:

– Design of Experiments (DOE): MR CFD employs DOE methods to determine the effect of various design variables on the performance and efficacy of a system or process. This facilitates the identification of the most influential factors and the optimization of the system based on these factors.

– Optimization Techniques: MR CFD uses various optimization techniques, including Genetic Algorithm (GA), Particle Swarm Optimization (PSO), and Response Surface Method (RSM), to identify the optimal solution to a given problem.

– Multi-Objective Optimization: In many engineering problems, multiple objectives conflict. MR CFD employs multi-objective optimization techniques to identify the solutions that offer the best trade-offs between them.

– CFD Simulations: MR CFD executes CFD simulations for various engineering applications. These simulations assist in understanding the fluid flow behavior, heat transfer, and other physical phenomena in a system.

– FEA Simulations: MR CFD also conducts FEA simulations to evaluate a system’s structural integrity, thermal performance, and other aspects.

– Consulting and Training: MR CFD offers consulting and training services to assist clients in understanding and implementing optimization and simulation techniques in their projects.
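The idea of trade-off solutions in multi-objective optimization can be made concrete with a small Pareto filter. The sketch below is illustrative only: the design tuples are made-up numbers, both objectives are written as quantities to minimize (a value to maximize, such as heat transfer, is entered with a negative sign), and the brute-force comparison is a teaching device, not the algorithm any particular optimizer uses.

```python
def dominates(a, b):
    """True if design a is no worse than b in every objective
    and strictly better in at least one (all objectives minimized)."""
    no_worse = all(x <= y for x, y in zip(a, b))
    better = any(x < y for x, y in zip(a, b))
    return no_worse and better


def pareto_front(points):
    """Keep only the designs that no other design dominates."""
    return [
        p for p in points
        if not any(dominates(q, p) for q in points if q != p)
    ]


# Hypothetical designs as (pressure_drop, -heat_transfer) pairs.
designs = [
    (1.0, -5.0),
    (2.0, -8.0),
    (3.0, -7.0),   # worse than (2.0, -8.0) in both objectives
    (1.5, -4.0),   # worse than (1.0, -5.0) in both objectives
    (4.0, -9.0),
]

front = pareto_front(designs)
for design in front:
    print(design)
```

Every design left on the front is a valid compromise: improving one objective from there means accepting a worse value of the other.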

The optimization and simulation services provided by MR CFD are pertinent to a wide range of industries, including automotive, aerospace, energy, oil and gas, HVAC, and many others.

Optimization (DOE) in ANSYS Fluent

Optimization is one of the most important stages of product development. In the past, trial-and-error strategies were used to produce more stable products with better performance. Today, with the development of technology and the need to reduce production costs, trial-and-error methods are no longer appropriate, and more reliable methods are needed to design and optimize products before the production stage. CFD software now lets engineers design and analyze a model, and apply the desired optimizations, before building the real product.

ANSYS supports two types of optimization: direct and indirect. Direct optimization evaluates candidate designs by running the simulation itself, without any intermediary step. In contrast, indirect optimization relies on the data obtained by RSM to build a mathematical function that predicts the system behavior, and the optimization is then carried out on that function.

Design of Experiments (DOE) is a method for systematically determining the relationship between the factors influencing a process and its output. In other words, it determines the relationship between causes and effects. This information is necessary for managing process inputs to optimize output.

In ANSYS Fluent, you can optimize your simulations using DOE. Here is an outline of how to accomplish this:

– Configure your model: Set up your model as you normally would.

– Define your parameters: To create a new parameter set, navigate to Project Schematic > Parameters > New Parameter Set. Here, you can specify the parameters of your investigation.

– Specify your DOE: Navigate to Project Schematic > Design Exploration > New Experiment Design. Select the type of DOE (Full Factorial, Central Composite, etc.) you wish to conduct. Select the parameters you previously defined and specify their range.

– Execute your DOE: Once your DOE has been defined, you can execute it by right-clicking it in the Project Schematic and selecting Update.

– Analyze your findings: Once the DOE has completed its execution, you can analyze the results in the Response Surface window.

Steps in DOE for a CFD project

Selection of factors and levels: Choose the factors that might influence the output. For each factor, choose two or more levels (values).

Design creation: Create a design matrix that lists the combinations of factor levels for which you will run simulations.

Perform simulations: Run the CFD simulations for each combination of factor levels.

Analysis: Analyze the results to see which factors affect the output most.

Optimization: Based on the results, optimize the process by setting the factors at their optimal levels.
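The five steps above can be walked through in a few lines of Python. This is a minimal sketch under stated assumptions: the two factor names are hypothetical, the levels are coded as -1 and +1, and `run_simulation` is a made-up algebraic stand-in for a CFD run, not a call into any solver.

```python
from itertools import product


def run_simulation(inlet_velocity, baffle_angle):
    """Placeholder for one CFD run (made-up response, NOT a solver call)."""
    return (10.0 + 3.0 * inlet_velocity - 1.0 * baffle_angle
            + 0.5 * inlet_velocity * baffle_angle)


# Step 1: factors and levels (coded units).
factors = {"inlet_velocity": (-1, 1), "baffle_angle": (-1, 1)}
names = list(factors)

# Step 2: design matrix (full factorial here).
design = list(product(*factors.values()))

# Step 3: one "simulation" per row of the design matrix.
responses = [run_simulation(*run) for run in design]


# Step 4: main effect = mean response at high level minus mean at low level.
def main_effect(index):
    high = [y for run, y in zip(design, responses) if run[index] == 1]
    low = [y for run, y in zip(design, responses) if run[index] == -1]
    return sum(high) / len(high) - sum(low) / len(low)


effects = {name: main_effect(i) for i, name in enumerate(names)}

# Step 5: pick the tested combination with the highest response.
best_run = max(zip(design, responses), key=lambda pair: pair[1])

print(effects)
print("best tested run:", best_run)
```

In a real project, step 5 would usually search a fitted model between the tested levels rather than simply picking the best tested run.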

Optimization (DOE) MR CFD Projects

DOE is a systematic method to determine the relationship between the factors affecting a process and the output of that process. In other words, it is used to find cause-and-effect relationships. This information is needed to optimize the processes, i.e., to make them as efficient and robust as possible.

In CFD simulation, DOE can be used to:

– Identify the most influential parameters on the CFD simulation results.

– Reduce the number of simulations needed, thus saving computational resources.

– Understand the interaction effects between different parameters.

Combustion Chamber Performance Optimization

What will I learn from this tutorial?

Step 1

In the first section of this tutorial, prepared by seasoned Engineers at MR-CFD, you will learn about various DOE methods, including RSM and its history. Next, the advantages and disadvantages of these methodologies relative to one another and their theoretical aspects will be discussed. Therefore, you can rest assured that you will acquire the necessary theoretical foundations in the introductory section even if you have no prior experience with DOE or Optimization.

Step 2

In the second phase, ANSYS software is used to conduct the RSM optimization procedure. This project demonstrates, step by step, how to apply the method to optimize various combustion chamber parameters. We start from scratch by designing the combustion chamber's geometry and show how you can parametrize your design. Next, you will see how the mesh is generated over that geometry. We then describe how to configure the Fluent software and define additional parameters. Finally, we demonstrate how to run a parameter correlation study to determine which input parameters significantly affect the output parameters. Omitting the input parameters that have little influence reduces the number of inputs and, consequently, the computational time.
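The screening idea behind a parameter correlation study can be sketched as follows. This is an illustration, not a description of what ANSYS computes internally: Spearman rank correlation is used as one common choice, the parameter names and sample values are hypothetical, the 0.5 threshold is arbitrary, and the ranking helper assumes there are no tied values.

```python
def ranks(values):
    """Rank values from 1 to n (assumes no ties, for brevity)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result


def spearman(xs, ys):
    """Spearman rank correlation for tie-free samples."""
    n = len(xs)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(xs), ranks(ys)))
    return 1.0 - 6.0 * d2 / (n * (n * n - 1))


# Hypothetical sampled inputs and one monitored output.
samples = {
    "inlet_diameter": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
    "wall_roughness": [0.3, 0.1, 0.6, 0.2, 0.5, 0.4],
}
heat_rate = [2.1, 3.9, 6.2, 8.1, 9.8, 12.2]

threshold = 0.5  # arbitrary cut-off chosen for this sketch
scores = {name: spearman(values, heat_rate) for name, values in samples.items()}
kept = [name for name, rho in scores.items() if abs(rho) >= threshold]

print(scores)
print("inputs kept for the DOE:", kept)
```

Inputs that fall below the threshold are dropped before the design points are generated, which is where the saving in computational time comes from.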

Using Central Composite Design (CCD), a DOE type commonly used with RSM, we generate the design point table, which lists all the experiments required for the optimization, by defining the range to investigate for each parameter. Indirect optimization in ANSYS then extracts, from the data generated by RSM, a mathematical function that describes the system's behavior.
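To show what a CCD design point table contains, the sketch below generates the points of a textbook central composite design in coded units: the 2^k factorial corners, 2k axial points, and one center point. The rotatable axial distance alpha = (2^k)^(1/4) is an assumption made here; ANSYS offers its own CCD variants, which may place the points differently.

```python
from itertools import product


def central_composite(k, alpha=None):
    """Coded CCD points: 2^k corners, 2k axial points, one center point."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design (assumed choice)
    corners = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    center = [tuple([0.0] * k)]
    return corners + axial + center


def to_physical(coded, low, high):
    """Map a coded value to physical units (-1 maps to low, +1 to high)."""
    return (low + high) / 2 + coded * (high - low) / 2


points = central_composite(3)
print(len(points), "design points for 3 input parameters")
for p in points:
    print(p)
```

Note that with alpha greater than 1 the axial points lie outside the low/high range of the corners, so the physical range of each parameter must leave room for them (or a face-centered variant with alpha = 1 can be used).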

Step 3

The direct optimization procedure is explained in detail in the following section. In this form of Optimization, as opposed to the previous method (RSM), the design points are generated based on the software’s requirements and a predetermined algorithm. As the optimization procedure advances, the software may determine that more sampling points are required to predict the mathematical function of the system precisely. In other words, unlike RSM-based Optimization, the entire optimization process is conducted in a single step. After the process, ANSYS will provide you with three candidate points representing the optimal solutions for your system based on the user-defined objective(s) (i.e., the conditions you want your model to satisfy).

In this project, the combustion process within a combustion chamber is simulated, and parameters such as the rate of heat production and pollutant formation are monitored. (The input and output parameters are listed in the table below.) As stated in the preceding paragraphs, the project aims to optimize the geometrical parameters of the combustion chamber to achieve goals such as maximizing the rate of heat production while minimizing the amount of pollutants produced. Two categories of optimization are examined: indirect optimization using the RSM method and direct optimization. In the indirect optimization phase, the CCD method generates the design points required for the RSM analysis. Then, we identify the model's most influential input parameters through a correlation process. Finally, we demonstrate how to optimize the input parameters of the chamber based on the data generated by the RSM analysis.

Direct Optimization

Optimization of a Compressor Cascade Using MOGA

A high-speed compressor cascade wind tunnel is used to investigate secondary flow phenomena occurring between the corners and sidewalls of axial compressors. This project optimizes a compressor cascade using the MOGA (Multi-Objective Genetic Algorithm) method. In the first phase of the project, we simulated a sectional compressor cascade.

The problem was then optimized for three input parameters and three output parameters. The input parameters are v_in (inlet velocity), alpha_degree (angle of attack), and pitch. The output parameters are the drag force, the lift force, and beta_degree. The objective is defined such that the drag force is minimized toward a target of zero, the lift force is maximized toward a target of 0.07, and the beta angle is driven to -12 degrees.
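One simple way to compare candidate designs against several targets at once is to score each by its scaled distance from those targets. The sketch below uses the three target values stated above; everything else is an assumption for illustration: the scaling factors, the candidate output values, and the squared-deviation score itself, which is not the ranking scheme MOGA uses internally.

```python
# Target values taken from the objective described above.
TARGETS = {"drag": 0.0, "lift": 0.07, "beta_degree": -12.0}

# Hypothetical scales, used only to make the three terms comparable.
SCALES = {"drag": 0.01, "lift": 0.07, "beta_degree": 12.0}


def goal_distance(outputs):
    """Sum of squared, scaled deviations from the targets (smaller is better)."""
    return sum(
        ((outputs[name] - TARGETS[name]) / SCALES[name]) ** 2
        for name in TARGETS
    )


# Made-up candidate points, standing in for optimizer output.
candidates = {
    "A": {"drag": 0.004, "lift": 0.065, "beta_degree": -11.0},
    "B": {"drag": 0.002, "lift": 0.050, "beta_degree": -12.5},
    "C": {"drag": 0.001, "lift": 0.068, "beta_degree": -12.2},
}

ranked = sorted(candidates, key=lambda name: goal_distance(candidates[name]))
for name in ranked:
    print(name, goal_distance(candidates[name]))
```

The choice of scales acts as a weighting between the objectives, so it should reflect how much deviation in each output is acceptable.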

Machine Learning and Optimization Application in Industrial Companies

Machine Learning in Industrial Companies

Machine Learning is a subset of Artificial Intelligence (AI) that enables systems to learn and improve from experience without being explicitly programmed. ML can be used in industrial companies for the following:

– Predictive Maintenance: Machine learning models can predict machine failures by analyzing sensor data. This serves to reduce maintenance costs and prevent downtime.

– Quality Control: Machine learning can identify anomalies and deviations from standard procedures, ensuring product quality.

– Supply Chain Optimization: Machine learning algorithms can analyze historical data to forecast demand, optimize inventory, and improve supply chain efficiency.

Optimization in Industrial Companies

Optimization is the process of making the best possible use of a situation or resource. In industrial companies, optimization can be applied in the following areas:

– Production Planning: Optimization techniques can be used to determine the optimal production schedule, workforce allocation, and resource utilization.

– Logistics Optimization: Optimization can reduce transportation and storage costs while maintaining service levels.

– Energy Efficiency: Optimization techniques can reduce costs and energy consumption.

In conclusion, implementing Machine Learning and Optimization in industrial organizations can substantially improve productivity, reduce costs, and strengthen quality control.

MR CFD Industrial Experience in the Optimization (DOE) Field

Some examples of Optimization (DOE) industrial projects recently simulated and analyzed by MR CFD in cooperation with related companies are as follows:

Ramjet Engine of an SR-71 Blackbird, Design and Combustion Optimization

A ramjet is an air-breathing jet engine that uses the engine's forward motion, rather than a rotary compressor, to compress incoming air. Because a ramjet cannot operate at zero airspeed, it cannot be used to power an aircraft throughout the entire flight. A ramjet-equipped aircraft requires additional propulsion to accelerate it to a speed where the ramjet can provide thrust.

In the combustor of a ramjet, thrust-generating combustion occurs at subsonic speeds. At supersonic flight speeds, the inlet must therefore slow the air entering the engine, and the shock waves in the inlet reduce the propulsion system's performance. Above Mach 5, ramjet propulsion is relatively inefficient.

Under these conditions, the choice of fuel is essential. Hydrogen's well-known, relatively short ignition delay is particularly advantageous, along with other favorable factors such as the high total temperature of the air at high Mach numbers.

This simulation considers an SR-71 Blackbird traveling at Mach 3.3. The objective is to model the ramjet engine, investigate its parameters, and capture the flow and combustion phenomena inside it. In the subsequent step, three crucial parameters are chosen, and the solution is optimized using the DOE method in ANSYS Workbench.

To optimize the device performance, make the most of the fuel, and consequently obtain the highest thrust, a Design of Experiments study is carried out on the solution. Three parameters that significantly impact the results are chosen to investigate their effects. The parameters are as follows:

- Energy mass Flow velocity

- Injection angle

- The distance from engine inlet to injection port

Finally, three-dimensional plots of the parameters and their influence on the thrust of the ramjet are generated. The DOE model also provided the minimum and maximum values of the parameters, taking their interactions into account.

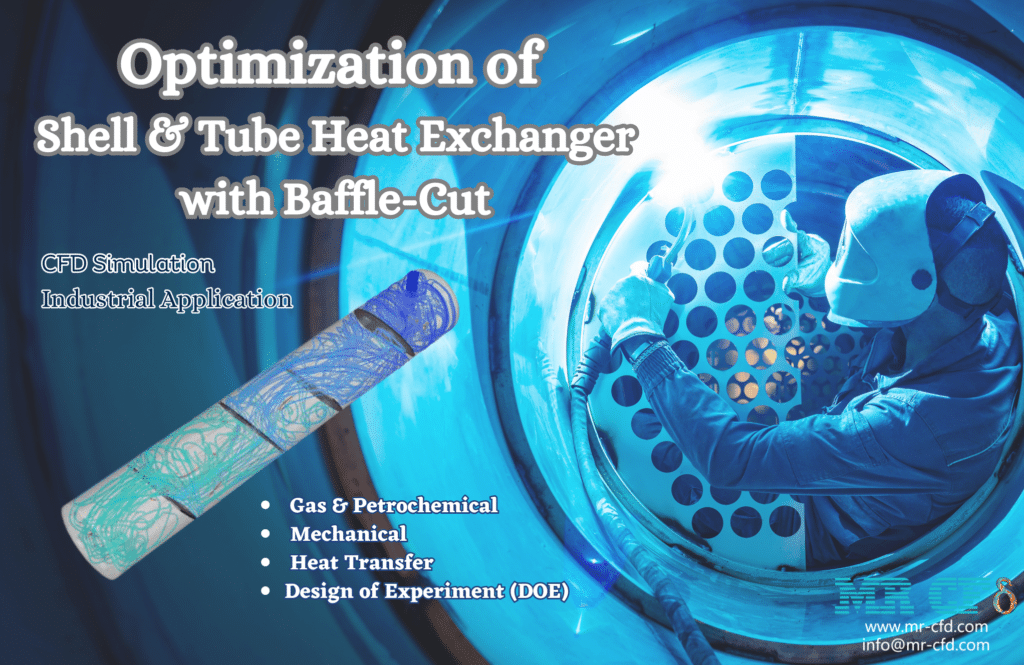

Optimization of Shell & Tube Heat Exchanger with Baffle-Cut

Before the development of modern optimization software, engineers and scientists utilized classical optimization techniques to find optimal solutions. For clarity, let’s consider an industrial and practical case study involving a shell-and-tube heat exchanger, as depicted in the figures below. This shell and tube heat exchanger has five heated tubes and four baffle cuts.

We intend to maximize heat transfer while minimizing pressure drop. Only the mass flow of the inlet water, the baffle cuts' angle, and the hot tubes' diameter can be altered. Previously, in the classical optimization procedure, we would simulate, for example, ten designs and then choose the best of them, but this approach had a problem.

There were more than one hundred potential designs, yet only ten were tested before the "optimal" design was selected, so this cannot be considered an actual optimization. Design of Experiments (DOE) offers an alternative: it lets us search for the best design across the whole range of possible designs rather than among a handful of tested cases.

The fundamental concept of DOE is to controllably vary the values of the design variables and observe the resulting changes in the response variable. It entails several stages, which will be discussed in the following section using the same heat exchanger issue as an illustration.

A shell and tube heat exchanger uses a shell (a large pressure vessel) to contain a bundle of tubes. One fluid circulates through the tubes and exchanges heat with another fluid flowing through the shell. It is commonly used to heat or cool large volumes of fluid in industrial applications. We intend to determine the optimal design for a shell-and-tube heat exchanger with baffle cuts using ANSYS Workbench modules.

As noted, we can only alter the mass flow of the inlet water, the baffle cuts' angle, and the hot tubes' diameter. In optimization, these three variables are known as input parameters or input variables. Each input parameter can be varied within a predetermined range chosen on the basis of experience, feasibility, limitations, predictions, etc.

Every stage of the optimization has been performed in ANSYS Workbench. The optimization itself uses the Multi-Objective Genetic Algorithm (MOGA). In addition, the CCD algorithm is employed as the Design of Experiments step and Genetic Aggregation as the RSM step.

You may find the Learning Products in the Optimization (DOE) CFD simulation category in the Training Shop. You can also benefit from the Optimization (DOE) Training Package, which is appropriate for Beginner, Intermediate, and Advanced users of ANSYS Fluent. MR CFD also offers the most comprehensive Optimization (DOE) Training Course for all ANSYS Fluent users, from beginners to experts.

Our services are not limited to the mentioned subjects. MR CFD is ready to undertake different and challenging projects in the Optimization (DOE) modeling field ordered by our customers. We even carry out CFD simulations for any abstract or concept design you have, to turn it into reality and help you reach the best strategy for what you have imagined. You can benefit from MR CFD expert consultation for free and then outsource your industrial and academic CFD project to be simulated and trained.

By outsourcing your project to MR CFD as a CFD simulation consultant, you will not only receive the project's resource files (geometry, mesh, case, data, etc.), but you will also be provided with an extensive tutorial video demonstrating how to create the geometry, generate the mesh, and define the needed settings (preprocessing, processing, and postprocessing) in ANSYS Fluent. Additionally, technical support is available afterward to clarify issues and ambiguities.